
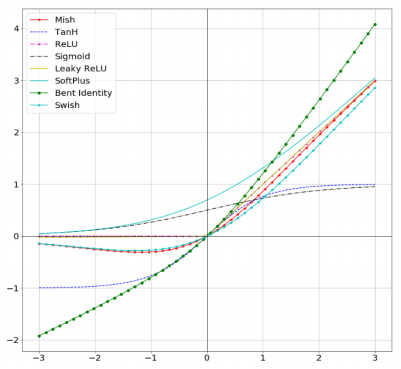

The notebook's setup cell calls `set_matplotlib_formats("svg", "pdf")` for export. That function is deprecated since IPython 7.23; use `matplotlib_inline.backend_inline.set_matplotlib_formats()` directly instead.

We will define a function to set a seed on all libraries we might interact with in this tutorial (here numpy and torch). This allows us to make our training reproducible. However, note that, in contrast to the CPU, the same seed on different GPU architectures can give different results. All models here have been trained on an NVIDIA GTX1080Ti.

Additionally, the following cell defines two paths: `DATASET_PATH` and `CHECKPOINT_PATH`. The dataset path is the directory where we will download the datasets used in the notebooks. It is recommended to store all datasets from PyTorch in one shared directory to prevent duplicate downloads. The checkpoint path is the directory where we will store trained model weights and additional files. The needed files will be downloaded automatically. In case you are on Google Colab, it is recommended to change the directories to start from the current directory.

```python
import os
import urllib.request
from urllib.error import HTTPError

import numpy as np
import torch

# Path to the folder where the datasets are/should be downloaded
DATASET_PATH = os.environ.get("PATH_DATASETS", "data/")
# Path to the folder where the pretrained models are saved
CHECKPOINT_PATH = os.environ.get("PATH_CHECKPOINT", "saved_models/Activation_Functions/")


# Function for setting the seed
def set_seed(seed):
    np.random.seed(seed)
    torch.manual_seed(seed)
    if torch.cuda.is_available():  # GPU operations have a separate seed
        torch.cuda.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)


set_seed(42)

# Additionally, some operations on a GPU are implemented stochastically for efficiency.
# We want to ensure that all operations are deterministic on GPU (if used) for reproducibility.
torch.backends.cudnn.deterministic = True
torch.backends.cudnn.benchmark = False

# Fetching the device that will be used throughout this notebook
device = torch.device("cuda:0") if torch.cuda.is_available() else torch.device("cpu")
print("Using device", device)

# Github URL where saved models are stored for this tutorial
base_url = ""  # left empty: the URL is not given in this copy of the post
# Files to download
pretrained_files = []  # left empty: the file list is not given in this copy of the post
# Create checkpoint path if it doesn't exist yet
os.makedirs(CHECKPOINT_PATH, exist_ok=True)

# For each file, check whether it already exists. If not, try downloading it.
for file_name in pretrained_files:
    file_path = os.path.join(CHECKPOINT_PATH, file_name)
    if not os.path.isfile(file_path):
        file_url = base_url + file_name
        print(f"Downloading {file_url}...")
        try:
            urllib.request.urlretrieve(file_url, file_path)
        except HTTPError as e:
            print(
                "Something went wrong. Please try to download the file from the GDrive folder, "
                "or contact the author with the full output including the following error:\n",
                e,
            )
```

As a first step, we will implement some common activation functions ourselves. Of course, most of them can also be found in the `torch.nn` package (see the documentation for an overview). However, we'll write our own functions here for better understanding and insight.

To make it easier to compare various activation functions, we start by defining a base class from which all our future modules will inherit. The body of that base class is not included here, so the block below assumes a minimal `nn.Module` stand-in, followed by the sigmoid and tanh implementations:

```python
import torch
import torch.nn as nn


class ActivationFunction(nn.Module):
    """Assumed minimal stand-in; the original base-class body is not shown in this post."""


class Sigmoid(ActivationFunction):
    def forward(self, x):
        return 1 / (1 + torch.exp(-x))


class Tanh(ActivationFunction):
    def forward(self, x):
        x_exp, neg_x_exp = torch.exp(x), torch.exp(-x)
        return (x_exp - neg_x_exp) / (x_exp + neg_x_exp)
```

Another popular activation function that has allowed the training of deeper networks is the Rectified Linear Unit (ReLU). Despite its simplicity as a piecewise linear function, ReLU has one major benefit compared to sigmoid and tanh: a strong, stable gradient for a large range of values.
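To make the gradient claim about ReLU concrete, here is a minimal sketch that compares the analytic derivatives of sigmoid, tanh, and ReLU at a few input values. It deliberately uses only Python's standard `math` module rather than torch autograd, and the helper names `sigmoid_grad`, `tanh_grad`, and `relu_grad` are made up for this illustration; they are not part of the tutorial's code.

```python
import math


def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid 1 / (1 + exp(-x)), which is s * (1 - s)."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)


def tanh_grad(x: float) -> float:
    """Derivative of tanh, which is 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2


def relu_grad(x: float) -> float:
    """Derivative of max(0, x); the value at exactly 0 is set to 0 here by convention."""
    return 1.0 if x > 0 else 0.0


if __name__ == "__main__":
    # Print the three derivatives side by side for growing positive inputs.
    for x in (0.5, 2.0, 5.0, 10.0):
        print(
            f"x={x:5.1f}  sigmoid'={sigmoid_grad(x):.6f}  "
            f"tanh'={tanh_grad(x):.6f}  relu'={relu_grad(x):.1f}"
        )
```

For large positive inputs, the sigmoid and tanh derivatives shrink toward zero, while the ReLU derivative stays at 1. For negative inputs the ReLU derivative is 0, so the "large range of values" in the claim refers to the positive side.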
